A paradigm shift in mathematics

How AI is poised to reshape the discipline beyond recognition

Way back in 2004, I wrote an essay in Swedish whose title, translated word-by-word into English, reads A paradigm shift in mathematics?. The difference compared to the title of the present text is only a single punctuation mark, but what a difference that makes, because the question mark serves effectively as a negation. My essay back then was a critical response to the book Dreams of Calculus by colleagues Johan Hoffman, Claes Johnson and Anders Logg, who predicted an imminent revolution in mathematics where, from then on, numerical analysis via the finite element method would sit firmly at the center of everything we mathematicians do, including education at all levels.

I recall some suggestions at the time that my judgment reflected a conservative temperament, but I believe that the subsequent two decades vindicated my view: rather than a wholesale transformation of the discipline, what we saw was a slow but steady continuation of the trend already underway since the 1950s, towards increased use of numerical computing on electronic devices. And I believe that I am capable, at least in some cases, of recognizing a paradigm shift when it truly is about to happen.

Such as now.

Unless I misremember, the first time I spoke publicly about the possibility of a near-term AI-driven revolution in the discipline of mathematics was in a panel discussion on March 2, 2023, where I suggested not only that one day AI might be able to independently write a passable PhD dissertation in mathematics, but also that this might well happen within a couple of years.[1] At least one professor of computer science in the audience found my suggestion preposterous. And indeed, March 2, 2025 (the deadline he extracted from the strongest possible reading of my statement) came and went without any such breakthrough.

Nevertheless, the period since the panel discussion in 2023 has seen remarkable progress in the mathematical competence of large language models (LLMs). Just weeks after our meeting, GPT-4 was released, and scored at the 89th percentile on the math section of the American SAT test. This was a huge leap forward compared to earlier models, but still nothing compared to what its successor GPT-5, released in August 2025, would achieve. Less than two months after the release, both computer scientist Scott Aaronson and mathematician Terence Tao (who are both world-leading in their respective fields) announced new mathematical results where GPT-5 had helped with key insights in parts of the proof.

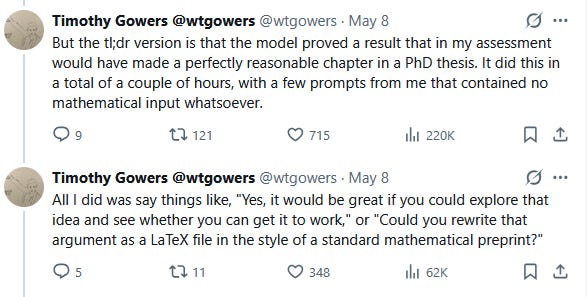

Subsequent developments in 2026 have been very fast, to the point of being hard to keep track of. Starting in January, various LLMs have independently solved open research problems from the iconic list known as the Erdős problems.[2] Legendary computer scientist Donald Knuth has a lovely paper on how, in February-March, he was scooped by Claude Opus 4.6 and GPT-5.4 Pro in solving a problem about cycles in directed graphs he had been working on. And just a few days ago, on May 8, another world-leading mathematician, Timothy Gowers, announced the outcome of his experiment to give a bunch of research questions he was interested in to GPT-5.5 Pro to work on. Here is his Twitter summary of what happened.

This seems to me very close to the “independently write a passable PhD dissertation in mathematics” milestone I suggested in March 2023. On his blog, Gowers, together with MIT student Isaac Rajagopal (whose work GPT-5.5 Pro was building on), explains at greater length what happened.

All of this is tremendously exciting, but what are the implications for the mathematical community? Let me quote Gowers’ reflections in the final section of the blog post at some length:

I would judge the level of the result that ChatGPT found in under two hours to be that of a perfectly reasonable chapter in a combinatorics PhD. It wouldn’t be considered an amazing result, since it leant very heavily on Isaac’s ideas, but it was definitely a non-trivial extension of those ideas, and for a PhD student to find that extension it would be necessary to invest quite a bit of time digesting Isaac’s paper, looking for places where it might not be optimal, familiarizing oneself with various algebraic techniques that he used, and so on.

It seems to me that training beginning PhD students to do research, which has always been hard (unless one is lucky enough, as I have often been, to have a student who just seems to get it and therefore doesn’t need in any sense to be trained), has just got harder, since one obvious way to help somebody get started is to give them a problem that looks as though it might be a relatively gentle one. If LLMs are at the point where they can solve “gentle problems”, then that is no longer an option. The lower bound for contributing to mathematics will now be to prove something that LLMs can’t prove, rather than simply to prove something that nobody has proved up to now and that at least somebody finds interesting.

[…]

Of course, everything I am saying concerns LLMs as they are right now. But they are developing so fast that it seems almost certain that my comments will go out of date in a matter of months. It is also almost certain that these developments will have a profoundly disruptive effect on how we go about mathematical research, and especially on how we introduce newcomers to it. Somebody starting a PhD next academic year will be finishing it in 2029 at the earliest, and my guess is that by then what it means to undertake research in mathematics will have changed out of all recognition.

My best guess right now would be that within the next 6-12 months, any PhD student in mathematics will, if he or she wishes to do so, be able to produce what up to now has been considered a perfectly fine PhD thesis in mathematics in no more than a week.[3] When this happens, senior faculty will of course also be able to similarly 100x their productivity. I think these changes might even come about in the hypothetical (and very unlikely) scenario where AI development hits a glass ceiling literally today and we never get any more powerful LLMs. It might suffice that leaders like Gowers and Tao[4] publish broadly accessible prompting manuals for how to get the most math out of these machines.

From my knowledge of the mathematical community and of human nature more broadly, I have no doubt that many mathematicians who are fond of the good old-fashioned way of doing research and have been doing it for decades will stick their heads in the sand and try to go on like before, without AI assistance, for as long as they can. This may well delay the radical transformation of research environments in mathematics a bit, but probably not by very much, because once a substantial fraction of their colleagues pick up on the superpowers that AI assistants can give them, the situation becomes highly unstable. I will follow the developments over the next year or two at my own department at Chalmers, as well as at other research-oriented mathematics departments, with great interest and a bit of concern.

Note, finally, that I haven’t said a word in this blog post about the possibly just as radical implications of AI for the other main part of what we do at mathematics departments: undergraduate education. And there are, of course, the kinds of AI risk that I talk about more often and that hit everyone equally, including us mathematicians. We are in for a wild ride.

[1] The term “near-term” is load-bearing here, because I had speculated earlier about a future such revolution, albeit not on such near-term time scales.

[2] Timothy Gowers comments on this:

Initially it was possible to laugh this off: many of the “solutions” consisted in the LLM noticing that the problem had an answer sitting there in the literature already, or could be very easily deduced from known results. But little by little the laughter has become quieter. The message I am getting from what other mathematicians more involved in this enterprise have been saying is that LLMs have got to the point where if a problem has an easy argument that for one reason or another human mathematicians have missed (that reason sometimes, but not always, being that the problem has not received all that much attention), then there is a good chance that the LLMs will spot it. Conversely, for problems where one’s initial reaction is to be impressed that an LLM has come up with a clever argument, it often turns out on closer inspection that there are precedents for those arguments, so it is still just about possible to comfort oneself that LLMs are merely putting together existing knowledge rather than having truly original ideas. How much of a comfort that is I will not discuss here, other than to note that quite a lot of perfectly good human mathematics consists in putting together existing knowledge and proof techniques.

[3] The qualifier “my best guess” here can be contrasted with my remark in March 2023, which concerned the lower end of my subjective probability distribution of the time until advanced AI.

[4] Terence Tao seems to spend much of his time these days figuring out efficient workflows for developing mathematics using LLMs; see, e.g., his recent and highly illuminating conversation with Dwarkesh Patel.

I wonder how long researchers in mathematics will be needed at all. Unlike athletes or board game players, whose competitions have entertainment value even though they do not stand a chance against machines, the work of these researchers is of interest only to themselves. Therefore, if they are no longer able to contribute significantly to the results produced by AI (which will also be capable of choosing the most interesting problems to work on), they may need to look for other jobs.